Self-defeating theories, part one: causal decision theory

Why the mainstream philosophical way of thinking about rational decisions is self-defeating.

[If you have not heard of the Newcomb problem, this post will probably not make a lot of sense. A good introduction might be reading pp. 146–154 of James M. Joyce’s The Foundations of Causal Decision Theory, or listening to this episode of the Rationally Speaking podcast.]

§1.

It’s fairly well-known that a causal decision theorist might have reasons—reasons justified by causal decision theory—to commit to not doing what CDT would otherwise prescribe. Specifically, if they could successfully commit themselves to one-boxing in the Newcomb problem, then they would find $1,000,000 in the opaque box, and this would be down to the causal influence of their precommitment: the predictor, presumably knowing about this commitment, would predict that they would one-box because of the commitment.

This is pretty much the only piece of consolation that causal decision theorists have in the face of the riches of one-boxers, ever since David Lewis correctly showed that the standard response to the ‘Why Ain’cha Rich?’ question is just “one more piece of two-boxist doctrine that one-boxers may consistently deny”. The precommitment strategy is an attempt by causal decision theorists to have their cake and eat it: retain CDT and yet also match the millions that the evidentialists rake in every time they face a Newcomb problem.

But the commitment in question can’t just involve swearing that you will one-box. Indeed, it isn’t even enough to tell everyone that you will one-box, or to give your spouse a t-shirt that says “my husband[1] is a one-boxer”, or even to get the letters ‘ONE BOX’ tattooed on your knuckles. If you’re a strong enough causal decision theorist then, when you see the boxes in front of you, you will nevertheless come to think, “Well, I’m here now, I may as well take the extra thousand.” And the opaque box will be empty, because the predictor knew you would do that.

Indeed, almost every currently working causal decision theorist would walk away from a Newcomb problem $999,000 poorer than they might have been. Why? Because even though they agree that pre-committing to one-boxing is the correct decision, everyone knows that their theory also tells them to break this commitment in the moment of decision if they realise that they can; and there is no fucking way a decision theorist who realises that they are facing a Newcomb problem doesn’t also realise that it’s open to them to two-box.

The point is that being a strong enough causal decision theorist might make it impossible to commit to one-boxing. (I say ‘might’ because I don’t think my arguments up to now have been airtight; at the end of §3 I upgrade this conclusion to a ‘will’.) But committing to one-boxing is exactly what CDT tells you to do. Does this mean CDT is self-defeating?

§2.

Nate Soares writes that “Most problems that humans face in real life are ‘Newcomblike’”:

Newcomblike problems occur whenever knowledge about what decision you will make leaks into the environment. The knowledge doesn't have to be 100% accurate, it just has to be correlated with your eventual actual action (in such a way that if you were going to take a different action, then you would have leaked different information). When this information is available, and others use it to make their decisions, others put you into a Newcomblike scenario.

I would recommend reading through the entire post (it’s relatively short, although readers may also have to look at the previous (also short) post to learn some of his jargon). But the basic idea is that a hell of a lot of human decisions have the following causal structure:

For ‘microexpressions’ here, you can substitute your favourite causal intermediary by which other agents can guess what you’re going to do: there are loads, from first impressions to personality traits to persistent desires to values to the circumstances you put yourself in to actual microexpressions. All of these are reliably correlated with your decision: not 100%, but enough that it can create a Newcomb problem. When others alter the payoff structures you face based on their prediction of your actions, which they do regularly, they create a Newcomb problem.
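To see how a merely correlated ‘leak’ is enough, here is a toy simulation. It is a minimal sketch under assumptions of my own, not Soares’ model: each agent simply has a fixed disposition to one-box or to two-box; a hypothetical `leaked_signal` function stands in for the microexpressions (or any other intermediary) and matches that disposition with probability `accuracy`; and the predictor fills the opaque box using only that signal, never the choice itself. The $1,000 and $1,000,000 payoffs are the usual Newcomb numbers; all names and parameters are illustrative.

```python
import random

# Usual Newcomb payoffs; everything else below is a hypothetical toy model.
SMALL = 1_000        # transparent box
BIG = 1_000_000      # opaque box, if the predictor expects one-boxing


def leaked_signal(one_boxer: bool, accuracy: float, rng: random.Random) -> bool:
    """The agent's 'microexpression': it reveals their true disposition with
    probability `accuracy` and points the wrong way otherwise."""
    return one_boxer if rng.random() < accuracy else not one_boxer


def play_once(one_boxer: bool, accuracy: float, rng: random.Random) -> int:
    # The predictor never sees the choice itself, only the leaked signal.
    opaque = BIG if leaked_signal(one_boxer, accuracy, rng) else 0
    # The agent then acts on their real disposition.
    return opaque if one_boxer else opaque + SMALL


def average_payoff(one_boxer: bool, accuracy: float,
                   trials: int = 100_000, seed: int = 0) -> float:
    """Monte Carlo estimate of the average payoff of a disposition."""
    rng = random.Random(seed)
    return sum(play_once(one_boxer, accuracy, rng) for _ in range(trials)) / trials


def expected_payoff(one_boxer: bool, accuracy: float) -> float:
    """Closed form for the same toy model, useful for checking the simulation."""
    p_big = accuracy if one_boxer else 1 - accuracy
    return p_big * BIG + (0 if one_boxer else SMALL)


if __name__ == "__main__":
    for acc in (0.6, 0.9):
        print(acc, average_payoff(True, acc), average_payoff(False, acc))
```

With `accuracy = 0.9`, the closed form gives $900,000 for the one-boxing disposition and roughly $101,000 for the two-boxing one, even though in any single round the two-boxer walks away with exactly $1,000 more than they would have got by one-boxing against the same box contents. The signal never has to be perfect; it only has to be correlated with what the agent actually does.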

You are constantly “leaking information” about how you make decisions to other actors, who then base their choices on this information. Thus, a causal decision theorist will leak information saying that they’re a causal decision theorist, and this is enough for others to be able to trap them in Newcomb problems. Sometimes, this will be a case of others exploiting them, but in other cases it’s just a case of the normal reactions of decent human beings:

I know at least two people who are unreliable and untrustworthy, and who blame the fact that they can't hold down jobs (and that nobody cuts them any slack) on bad luck rather than on their own demeanors. Both consistently believe that they are taking the best available action whenever they act unreliable and untrustworthy. Both brush off the idea of “becoming a sucker”. Neither of them is capable of acting unreliable while signaling reliability. Both of them would benefit from actually becoming trustworthy.

For both of these individuals, acting reliably might be weakly dominated by acting unreliably in the moment of decision given their payoff structures; but these payoff structures depend on others’ predictions of their reliability, and if others predict they will act reliably then they will be provided with better opportunities. This is a Newcomb problem.

Soares gives a number of other examples, which are worth reading, but the general point is as follows:

In fact, humans have a natural tendency to avoid “non-Newcomblike” scenarios. Human social structures use complex reputation systems. Humans seldom make big choices among themselves (who to hire, whether to become roommates, whether to make a business deal) before “getting to know each other”. We automatically build complex social models detailing how we think our friends, family, and co-workers, make decisions.

When we make decisions based on how we expect others will behave, we can trap them in Newcomb problems. And we do this all the time. Speaking very roughly, “CDT fails when it gets stuck in the trap of ‘no matter what I signaled I should do [something mean]’, which results in CDT sending off a ‘mean’ signal and missing opportunities for higher payoffs.”[2]

How might a causal decision theorist deal with this situation? Again, the general solution is to commit to one-boxing: that is, manage to hold yourself to doing something even though first-order CDT considerations recommend against it. But again, it’s not enough to tell yourself to behave less mean, to promise you’ll be less mean, to write a whining blog post about how hard you’re working to be less mean. An agent who genuinely and sincerely believes CDT will do all these things; but in the moment of decision, thinking about what would cause the best outcome, they pick the mean option. And the people around them knew they’d do it.

Let’s say that a situation ‘breaks’ CDT when CDT recommends that an agent commits, ahead of time, to not doing what CDT prescribes in that situation. I use the word ‘breaks’ because I think it’s accurate: a genuine and sincere CDT-following agent will end up in a practical contradiction here, because they have no way to reliably pre-commit to the decision, as their belief in CDT will undermine their commitments. They cannot do what CDT prescribes precisely because they believe in CDT.

If CDT only broke some of the time, it would not necessarily be self-defeating: even though sometimes the theory recommends doing something that is incompatible with believing the theory, these scenarios are rare enough that on balance CDT gives positive weight to believing the theory. But every Newcomb (or ‘Newcomblike’) problem breaks CDT, and these problems are the norm in human society. In ordinary everyday life, CDT regularly recommends courses of action that causal decision theorists cannot actually follow. Thus, if we plug the decision “should I believe CDT?” into CDT, the frequency with which the theory breaks means that CDT will recommend that we don’t believe it; we’d be better off with a decision procedure that directly tells us to one-box. CDT is self-defeating.
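The role that frequency plays here can be put as a back-of-the-envelope calculation. Suppose, purely hypothetically, that believing CDT gains some amount `gain_elsewhere` per decision in non-Newcomblike situations (relative to a procedure that simply one-boxes), and loses `loss_in_newcomblike` per decision in Newcomblike ones. Then CDT, applied to the question “should I believe CDT?”, favours belief only while the share of Newcomblike decisions stays below a break-even point. The linear model, the function names and any numbers plugged in are my assumptions; nothing in the argument depends on particular values.

```python
def net_value_of_believing_cdt(newcomblike_share: float,
                               gain_elsewhere: float,
                               loss_in_newcomblike: float) -> float:
    """Average per-decision advantage of believing CDT over a hypothetical
    one-boxing procedure. Positive means CDT recommends believing CDT."""
    if not 0.0 <= newcomblike_share <= 1.0:
        raise ValueError("newcomblike_share must be a probability")
    return ((1 - newcomblike_share) * gain_elsewhere
            - newcomblike_share * loss_in_newcomblike)


def break_even_share(gain_elsewhere: float, loss_in_newcomblike: float) -> float:
    """Share of Newcomblike decisions above which CDT recommends against itself."""
    return gain_elsewhere / (gain_elsewhere + loss_in_newcomblike)
```

For instance, if the hypothetical gain elsewhere were 1 unit and the loss in each Newcomblike case 9 units, `break_even_share(1, 9)` is 0.1: once more than one decision in ten is Newcomblike, CDT’s own verdict is not to believe CDT.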

§3.

You may still not be convinced that a sincere causal decision theorist couldn’t pre-commit to (the analogue of) one-boxing even in these human scenarios. After all, there seems to be a fairly easy way to commit to one-boxing in the original Newcomb problem. Write a cheque for $1,000, made out to your country’s Nazi party, and make a friend you trust promise to send it if you two-box.[3] In the moment of decision, CDT now recommends against two-boxing (no monetary gain plus the moral loss of giving money to Nazis), so the worry of the seemingly-committed one-boxer facing the problem and going “well, I might as well take the extra thousand” disappears. And the predictor will see this commitment, know that you’re going to one-box, and put a million in the opaque box. The causal decision theorist wins!

This is a costly commitment, whereby you ensure that two-boxing becomes more costly (by at least the equivalent of $1,000). If you make a costly commitment, then unlike with a regular commitment, CDT will actually recommend one-boxing in the moment of decision. Thus, this is a reliable way to commit to one-boxing, even for the causal decision theorist. And because of this, and because CDT recommends committing to one-boxing, CDT will recommend that you make costly commitments. Every part of this plan is both possible for the sincere causal decision theorist and recommended by CDT: ‘costly commitments’ like this can genuinely work, and the Newcomb problem will no longer break CDT.[4] So philosophers might ask: why can’t we make costly commitments in more general ‘Newcomblike’ scenarios?
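The arithmetic of the cheque strategy fits in a few lines. In this toy version (all assumptions of my own): the cheque plus the moral cost of funding Nazis is folded into a single dollar-equivalent `penalty`, paid only if you two-box; the agent reasons causally at the moment of choice, holding the box contents fixed; ties go to two-boxing; and a hypothetical perfect predictor simply computes what the agent will do before filling the box.

```python
SMALL = 1_000        # transparent box
BIG = 1_000_000      # opaque box when full


def cdt_choice(opaque_contents: int, penalty: float) -> str:
    """In-the-moment CDT: the box contents are causally fixed, so compare the
    consequences of each act holding them constant. `penalty` (hypothetical)
    is the dollar-equivalent cost, cheque plus moral loss, of two-boxing."""
    one_box = opaque_contents
    two_box = opaque_contents + SMALL - penalty
    # Assumption: a tie goes to two-boxing.
    return "one-box" if one_box > two_box else "two-box"


def committed_outcome(penalty: float) -> float:
    """Payoff when a (hypothetical) perfect predictor sees the commitment."""
    # The contents cancel out of cdt_choice, so the predictor can compute the
    # choice before deciding what to put in the opaque box.
    predicted = cdt_choice(0, penalty)
    opaque = BIG if predicted == "one-box" else 0
    actual = cdt_choice(opaque, penalty)
    return opaque if actual == "one-box" else opaque + SMALL - penalty
```

`committed_outcome(0)` is the uncommitted causal decision theorist’s $1,000, while any penalty above $1,000 flips the in-the-moment verdict and so, via the predictor, yields $1,000,000. The box contents cancel out of the comparison in `cdt_choice`, which is just the dominance reasoning that makes uncommitted CDT two-box.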

It’s very easy to get into the weeds in these kinds of arguments: indeed, to presage a later point, they tend to mimic discussions about the place of indirect considerations (like rules or dispositions) in utilitarianism. So let’s zoom out a little.

CDT tells agents to treat their actions as interventions. In the terminology of causal modelling, an intervention is (roughly speaking) just some causal process that is suitably independent of the system it acts on and thus breaks some of the causal connections within that system. An intervention comes from outside the system and upsets the regular correlations within the system, so when considering interventions we have to analyse the specific causal aspects of the process rather than just statistical regularities. This is exactly how CDT tells you to think about your actions: you consider only what is within causal reach of your decision, not anything that is merely correlated with it. That CDT identifies actions as interventions has become accepted among philosophers: for example, the Stanford Encyclopedia of Philosophy says that “The central idea [of causal decision theory] is that the agent should treat her action as an intervention.”

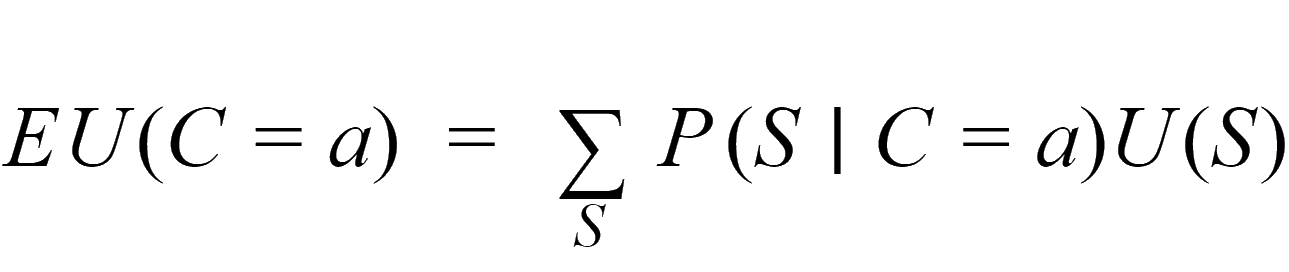

Putting it more formally (skip this bit if you would rather avoid the technical stuff), the traditional way of defining expected utility, the thing to be maximised, was with this equation (letting C be the variable representing your choice, a be the action under consideration, S range over states of the world, and U(a, S) be the utility of doing a in state S):

$$\mathit{EU}(a) = \sum_{S} P(S \mid C = a)\, U(a, S)$$

CDT tells us that we should instead define it as:

$$\mathit{EU}(a) = \sum_{S} P(S \mid \mathrm{do}(C = a))\, U(a, S)$$

The do operator here signals that an intervention has occurred that has ‘set’ the value of the variable C to C=a. If we graph the causal structure in question (in the way that Soares does; see above), an intervention on C can be modelled by removing all the arrows that point towards it, so that its value has been set to C=a without changing the values of any of its causal antecedents. This breaks the correlations between this variable and its causal ‘parents’. The ‘naïve’ definition of expected utility took into account mere correlations between your action and states of the world because it used ordinary conditional probability; including the do operator in the equation avoids this.[5]
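The difference between conditioning on C = a and intervening with do(C = a) can be made concrete for the Newcomb problem. This is a sketch under stated assumptions: the only state variable is whether the opaque box is full; when conditioning, I assume (hypothetically) that P(full | C = one-box) equals the predictor’s `accuracy` and P(full | C = two-box) equals 1 − `accuracy`; under the intervention, the box keeps its unconditional probability `prior_full`, since cutting the arrows into C leaves the prediction untouched. The function and parameter names are illustrative.

```python
SMALL = 1_000       # transparent box
BIG = 1_000_000     # opaque box when full


def utility(action: str, box_full: bool) -> int:
    """U(a, S): money received for action a in state S."""
    return (BIG if box_full else 0) + (SMALL if action == "two-box" else 0)


def eu_conditional(action: str, accuracy: float) -> float:
    """Sum over S of P(S | C = a) U(a, S). Assumption: the choice is evidence
    about the prediction, so P(full | C = a) tracks the predictor's accuracy."""
    p_full = accuracy if action == "one-box" else 1 - accuracy
    return p_full * utility(action, True) + (1 - p_full) * utility(action, False)


def eu_do(action: str, prior_full: float) -> float:
    """Sum over S of P(S | do(C = a)) U(a, S). Setting C = a cuts the arrows into
    the choice, so the box keeps its unconditional probability `prior_full`."""
    return prior_full * utility(action, True) + (1 - prior_full) * utility(action, False)


if __name__ == "__main__":
    for a in ("one-box", "two-box"):
        print(a, eu_conditional(a, accuracy=0.9), eu_do(a, prior_full=0.5))
```

With `accuracy = 0.9`, conditioning favours one-boxing (about $900,000 against $101,000), while under `do` two-boxing comes out $1,000 ahead whatever value `prior_full` takes.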

The problem, however, is that actions are not (in general) interventions. While interventions break correlations, actions (in general) do not, because they are not independent of the world we are acting on. In the original Newcomb problem, if choosing to two-box were an intervention then two-boxers would find a million in the opaque box just as often as one-boxers do; but they don’t. More generally, our actions are reliably (not 100%, but reliably) correlated with the circumstances we act in and the character traits and quirks we possess. Human society essentially relies on this fact for forming stable relationships and institutions, and indeed we rely on it when thinking about other people’s choices, even if we sometimes forget it in the first-personal case (more on this in a future post in this series).
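The claim that, if choices were interventions, two-boxers would find the million as often as one-boxers can be checked in a toy simulation. Again the set-up is my own assumption: half the agents are disposed to one-box, and the predictor reads dispositions correctly with probability 0.9. In the ordinary regime the choice flows from the disposition; in the interventional regime an exogenous coin sets the choice, much like an experimenter assigning pill or placebo. The function name `million_rates` is hypothetical.

```python
import random
from typing import Dict


def million_rates(intervene: bool, accuracy: float = 0.9,
                  trials: int = 100_000, seed: int = 1) -> Dict[str, float]:
    """Fraction of one-boxers and of two-boxers who find the opaque box full."""
    rng = random.Random(seed)
    full_count = {"one-box": 0, "two-box": 0}
    chosen_count = {"one-box": 0, "two-box": 0}
    for _ in range(trials):
        # Hypothetical population: half the agents are disposed to one-box.
        disposed_to_one_box = rng.random() < 0.5
        # The predictor reads the disposition correctly with probability `accuracy`.
        if rng.random() < accuracy:
            predicted_one_box = disposed_to_one_box
        else:
            predicted_one_box = not disposed_to_one_box
        box_full = predicted_one_box
        if intervene:
            # Exogenous coin flip sets C, independently of the disposition.
            one_box = rng.random() < 0.5
        else:
            # Ordinary action: the choice flows from the disposition.
            one_box = disposed_to_one_box
        key = "one-box" if one_box else "two-box"
        chosen_count[key] += 1
        full_count[key] += int(box_full)
    return {key: full_count[key] / chosen_count[key] for key in full_count}
```

Observationally, one-boxers find the box full about 90% of the time and two-boxers about 10%; under the coin-flip intervention both groups see it full about half the time, because the coin is independent of everything the predictor looks at.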

There are, of course, many cases where human actions are and should be modelled as interventions; but these just are the cases where our actions are sufficiently causally independent of the system we are analysing. We can treat the experimenter’s decision to give one group a pill and the other a placebo as an intervention, but only because it is appropriately exogenous to the biological system under study. The whole point of the Newcomb problem is to construct a case where the agent’s decision is not appropriately exogenous to the system under analysis; and the whole point of Soares’ argument is to show that such situations are normal in society.

This is why non-costly commitments are never a reliable solution to Newcomblike problems for causal decision theorists. If you treat your action as an intervention, then you must believe that all the arrows going into your decision have been broken—including any that might point from the commitment. CDT tells you to view the commitment as just a background fact of life that you cannot causally affect, which (if it doesn’t affect the payoff structure of the problem because it is non-costly) means it is irrelevant. The only way around this is to not view your action as an intervention, so that the commitment can possibly become relevant; but this just is not to believe CDT, as what distinguishes CDT is that it tells you to treat your actions as interventions.
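The ‘remove all the arrows that point towards it’ operation is easy to sketch as graph surgery. The graph below is a hypothetical Newcomb problem with a non-costly commitment: the commitment and the agent’s disposition both feed into the prediction and the choice, and the payoff depends on the choice and the prediction. The node names and structure are my own simplification, not Soares’ diagram, and `do`, `ancestors` and `descendants` are illustrative helpers rather than any library’s API.

```python
from typing import Dict, List, Set

Graph = Dict[str, List[str]]  # node -> list of its causal parents

NEWCOMB_WITH_COMMITMENT: Graph = {
    "commitment": [],
    "disposition": [],
    "prediction": ["commitment", "disposition"],
    "choice": ["commitment", "disposition"],
    "payoff": ["choice", "prediction"],
}


def do(graph: Graph, node: str) -> Graph:
    """Graph surgery for do(node = value): delete every arrow into `node`
    and leave all other mechanisms untouched. Returns a new graph."""
    surgered = {n: list(parents) for n, parents in graph.items()}
    surgered[node] = []
    return surgered


def ancestors(graph: Graph, node: str) -> Set[str]:
    """All nodes with a directed path into `node`."""
    found: Set[str] = set()
    stack = list(graph[node])
    while stack:
        parent = stack.pop()
        if parent not in found:
            found.add(parent)
            stack.extend(graph[parent])
    return found


def descendants(graph: Graph, node: str) -> Set[str]:
    """All nodes within causal reach of `node`."""
    children = {n: [c for c, ps in graph.items() if n in ps] for n in graph}
    found: Set[str] = set()
    stack = list(children[node])
    while stack:
        child = stack.pop()
        if child not in found:
            found.add(child)
            stack.extend(children[child])
    return found
```

In the surgered graph the commitment is no longer an ancestor of the choice, and the only thing downstream of the choice is the payoff; the prediction, and the commitment behind it, sit outside the choice’s causal reach. A costly commitment would correspond to adding an arrow from `commitment` to `payoff`, which survives the surgery.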

And this is also why costly commitments don’t work in general. Sure, to argue this I could have brought up an example which rules out costly commitments by design (like some versions of Gregory Kavka’s toxin puzzle), but it would be easy to get lost arguing about the niceties of the thought experiment. By zooming out, we’ve seen the more general issue at play here. Human decisions are not (in general) interventions, and this includes the decision to make a costly commitment. Whether or not you make a costly commitment can be correlated with features of the system you are acting on; in other words, you can get trapped in a Newcomb problem while trying to make a costly commitment to one-box on a different Newcomb problem. Friendship and gambling are the most common systems decision theorists rely on to construct the right kind of side bets for costly commitments; both rely very strongly on the causal structure described by Soares in order to operate—you will find few friends or gambling partners if you’re known to be untrustworthy. Unless they find some friends or gambling partners in the first place, the causal decision theorist can’t commit to one-boxing; but, as Soares argues, they cannot find friends or gambling partners unless they’ve committed to one-boxing. We cannot expect, in general, that there will be opportunities to make costly commitments to avoid Newcomb problems.

So costly commitments are not a solution in general, and non-costly commitments are never a solution: believing in CDT will causally generate fewer opportunities to win in Newcomb problems. And since Newcomb problems are so widespread, the course of action that causally generates the most utility—that is, the course of action prescribed by CDT—is to not believe CDT. The theory is self-defeating.

[Edit 03/10/2021: I think there is a subtle lack of clarity in my terminology in this post, which I have only now noticed. Ignore this if you don’t care about terminological quibbles.

A Newcomb problem, as philosophers use the phrase, is a situation where one’s actions provide evidence for some state they do not causally bring about. A correlation between your actions and the state of the world is a necessary, but not sufficient, condition for a scenario to be a Newcomb problem in this sense. For sufficiency, you need to add that the agent is relevantly uncertain about the state of the world. Consider the original problem, but modified so that neither box is opaque and you can see whether the formerly opaque box contains $0 or $1,000,000: while the correlation is still present (guaranteed by the actions of the predictor), your action cannot provide any new evidence about what’s in the box, so philosophers would not consider this a Newcomb problem.

In this post, however, I have tended to use ‘Newcomb problem’ to refer to any situation with the relevant causal structure, even if it doesn’t also have the requisite uncertainty. For instance, I said that Soares’ unreliable acquaintances face Newcomb problems; but in the moment of any given decision, it is likely that they know the options they face (their choice doesn’t give them any new evidence), and so philosophers would not call this a Newcomb problem.

I don’t think this terminological choice affects my arguments (CDT still demands pre-commitment in these situations, so they do break the theory in the way I discussed), but I should probably have used Soares’ term ‘Newcomblike’ instead.]

[1] The demographics of decision theory aren’t exactly balanced.

[2] Obviously, this is significantly oversimplified and very vague, especially since one can plug morally-laden utilities into CDT. But it’s meant only to help the reader’s imagination conjure up common scenarios that are Newcomb problems in exactly this way.

[3] I take this specific strategy from A. J. Jacobs’ Drop Dead Healthy.

[4] Specifically, I defined ‘breaks’ as follows: “a situation ‘breaks’ CDT when CDT itself recommends that an agent commits to not doing what CDT prescribes in that situation.” A costly commitment, unlike other forms of commitment, actually changes the situation (the possible payoffs) so that CDT does prescribe the option that the agent has committed to. So costly commitments help the agent avoid situations that would break CDT.

[5] For more on this way of thinking about CDT, see chapter 4 of Judea Pearl’s book Causality, or follow up the citations in §4.8 of the SEP article on Causal Models.