Let me start with a fable.

A group of children are playing with a set of blocks. They have observed that they can group these blocks into two sets of two. Building on this fundamental observation, they have figured out how to rearrange the blocks, so they’re grouped into a different pair of sets; they have stacked them on top of each other for practical purposes; they have even made a detailed record of the blocks’ colours. But for some reason, they have not figured out that the total number of blocks is four.

It’s not that they think there are more or fewer than four blocks; it’s just that they never get around to properly asking how many blocks there are in total. They can see two blocks over here, and two blocks over there, but for some reason they just can’t bring these thoughts together in the right way. They’ve brushed up against the question a few times (maybe they’ve figured out through trial and error that you can give four children exactly one block each, and you’ll have no blocks left), but they’ve not actually got the idea ‘there are four blocks’ in their heads.

This, of course, would result in some confusion (something a little like the Piaget conservation tasks). Eventually, an adult begins to watch the children playing with their blocks and notices their confusion. To help them out, the adult asks: ‘How many blocks are there?’ The children respond, ‘We don’t know.’ So the adult guides them through: ‘Well, there are two blocks here [points], and two blocks here [points], so that means there’s four blocks altogether, right?’

The adult then leaves to attend a conference on Bohmian mechanics, and the children are left alone. They mutter to one another: ‘This “2+2=4” business would be an astounding theory if it were true! But there’s no experimental evidence yet.’ One enterprising child decides to put the theory to the test: they bring the two pairs of blocks together, and begin to count: ‘one… two… three… FOUR!’ They run to tell their fellow children about this fantastic new experimental result, and the other children celebrate it as one of the greatest new findings in the field of playing with blocks. The experimental child is praised as a truly great mind, and they are later awarded the Nobel Prize in Block-Playing for ‘experiments with double-paired-blocks, establishing the 2+2=4 equation’.

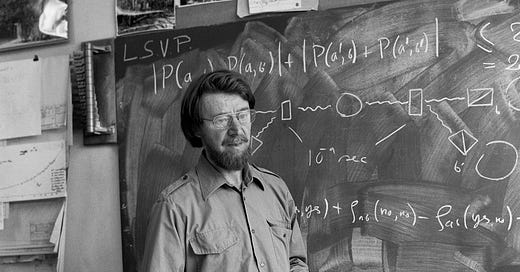

In this fable, the silly children represent the physics community, and the adult represents one of the truly great thinkers of the twentieth century: J. S. Bell.

Bell’s theorem

There is an implicit model of the relationship between theory and experiment in physics, which goes something like this. Theoretical physicists use intuition and mathematics to design a hypothesis about how the world works—a theory of some fundamental physical phenomenon (say, gravity, or electromagnetism) that makes testable, empirical predictions about it. And then, the experimentalists go out and test those predictions. If the predictions are validated, this is evidence for the theory; but if the predictions are wrong, this is evidence against it. This model is a lot like (a very simplified version of) Karl Popper’s philosophy of science, and the best example of it is the case-study Popper was inspired by: Albert Einstein coming up with thought experiments about rockets, using them to derive predictions about the bending of light in space, and having these predictions confirmed by experiment.

Bell’s theorem, J. S. Bell’s most celebrated intellectual contribution, is one of the most remarkable pieces of theoretical physics of the twentieth century. But it absolutely does not fit into this story. Bell was concerned less with coming up with a new theory than with clarifying some implications of an already well-established theory—in this case, quantum mechanics.

Bell’s central claim is this: quantum mechanics is a nonlocal theory. ‘Nonlocal’ here means that the theory allows influences to travel arbitrarily quickly, faster than the speed of light. Event A can be caused by event B even if B happened an arbitrarily short time before A, too recently for any physical signal to have travelled from one location to the other. Bell aimed to show that nonlocality, in contradiction to the intuitions of physicists everywhere, is an ineliminable part of quantum mechanics.

Bell’s theorem is mathematically true

Bell’s proof had two stages. First, he derived a set of inequalities—the ‘Bell inequalities’—that he argued would hold in any purely local theory (meaning a theory that respected the speed of light). Second, he proved with logical precision that quantum mechanics did not satisfy the Bell inequalities: in certain situations, quantum mechanics predicts that physical systems violate the Bell inequalities. All local theories respect the Bell inequalities; quantum mechanics does not; therefore, quantum mechanics is a non-local theory.
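To make ‘would hold in any purely local theory’ concrete, here is a minimal sketch in Python. It uses the CHSH form of the inequality, a later variant due to Clauser, Horne, Shimony and Holt, swapped in here because it is easy to check by brute force; it is not the inequality from Bell’s original paper. The sketch assumes that each measurement outcome is +1 or -1 and is fixed in advance by local factors alone, which is exactly the kind of assumption the quibbling is about. The function names are illustrative.

```python
from itertools import product


def chsh(a0, a1, b0, b1):
    """CHSH combination for one pre-assigned set of outcomes.

    a0, a1: Alice's outcomes (+1 or -1) for her two possible settings.
    b0, b1: Bob's outcomes (+1 or -1) for his two possible settings.
    Locality is built in: Alice's outcomes do not depend on Bob's setting,
    and vice versa.
    """
    return a0 * b0 + a0 * b1 + a1 * b0 - a1 * b1


# Enumerate all 16 ways of pre-assigning the four outcomes.
values = [chsh(*outcomes) for outcomes in product((-1, 1), repeat=4)]

# The largest magnitude any single assignment can reach.
local_bound = max(abs(v) for v in values)

print(sorted(set(values)))  # [-2, 2]
print(local_bound)          # 2
```

Every assignment gives exactly +2 or -2, so any statistical mixture of such assignments gives an average between -2 and +2. Under the stated assumptions, that is the inequality.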

The first part of Bell’s argument is open to philosophical quibbling: some people (notably, many-worlds theorists) think he made some assumptions that were overly strong, and they argue that you can design a purely local theory that doesn’t respect the Bell inequalities. But nobody has any objections to the second part of the proof: it is unquestionably true that quantum mechanics violates the Bell inequalities. This is just a matter of logical fact, something you get pretty immediately by plugging the right numbers into the theory. There is no way that quantum mechanics could be true without the Bell inequalities being violated.
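As a rough illustration of ‘plugging the right numbers into the theory’, the sketch below evaluates the CHSH combination (a later variant of Bell’s inequality, used here for convenience rather than taken from Bell’s paper) for a pair of spin-1/2 particles in the singlet state. It relies on the textbook quantum-mechanical prediction that the correlation between spin measurements along directions separated by an angle θ is -cos θ. The function names and the particular choice of angles are assumptions made for illustration; any purely local assignment of outcomes keeps the magnitude of this combination at or below 2.

```python
import math


def singlet_correlation(angle_a, angle_b):
    """Quantum prediction for the singlet state: E(a, b) = -cos(a - b).

    Angles are measurement directions in a common plane, in radians.
    """
    return -math.cos(angle_a - angle_b)


def chsh_value(a0, a1, b0, b1):
    """CHSH combination of the four correlations for the given settings."""
    return (
        singlet_correlation(a0, b0)
        + singlet_correlation(a0, b1)
        + singlet_correlation(a1, b0)
        - singlet_correlation(a1, b1)
    )


# A standard choice of settings that maximises the violation.
a0, a1 = 0.0, math.pi / 2
b0, b1 = math.pi / 4, -math.pi / 4

S = chsh_value(a0, a1, b0, b1)
print(abs(S))            # about 2.828, i.e. 2 * sqrt(2)
print(abs(S) > 2)        # True: the local bound of 2 is exceeded
```

No experiment appears anywhere in this calculation: the violation follows from the theory’s own formula for the correlations.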

Let me be very clear about this. The fact that the Bell inequalities are violated is logically entailed by quantum mechanics. This is a mathematical, logical, analytic truth. As a corollary, then, we can note that even before Bell put pen to paper, physicists already had mountains of evidence for Bell inequality violations. This is because you cannot have quantum mechanics without Bell inequality violations, and so every single piece of evidence for quantum mechanics they had was, ipso facto, evidence for Bell inequality violations!

The problem was just that before Bell, physicists were confused. They had plenty of evidence for Bell inequality violation staring them in the face; but, like the children in my fable, they simply could not put two and two together. Even truly great physicists like Einstein struggled to grasp the very basic fact that quantum mechanics is unavoidably nonlocal. Bell’s contribution was to clear up this confusion by clearly laying out a logical fact: quantum mechanics logically entails Bell inequality violations, which seem incompatible with locality.

Unfortunately, this contribution did not have the desired effect.

Physicists still don’t understand Bell’s theorem

The above explanation of Bell’s theorem is not hard to come by: you can find a much better version of essentially the same explanation on the SEP page on ‘Bell’s theorem’, for instance. But it’s not the explanation you’d get from the average physicist or physics writer. Their explanations tend to be much more overblown, and involve much more hand-waving about ‘local realism’ and ‘hidden variables’.

‘Hidden variables’ is the idea that the wavefunction—the mathematical object that quantum mechanics uses to describe the current state of a physical system—is ‘incomplete’: it only describes part of physical reality, not the whole thing, and there are other relevant variables (which may or may not be ‘hidden’ from us). Since the wavefunction is often thought to be scary and unreal—a very silly approach to quantum mechanics, but an unfortunately common one—the idea that it only gets at part of the truth is associated with ‘realism’, the position that reality is in some sense ‘objective’ and independent from measurement: the other variables are taken to represent the more fundamental reality.

As should be clear from the scare quotes galore, this whole discourse is a minefield of sloppy reasoning, imprecisely-defined terms, and proof-by-association. Realist theories don’t have to have hidden variables, hidden variables theories don’t have to be realist, and none of this has much to do with Bell’s theorem at all. But physicists commonly try to connect all of this mess with the idea of locality, by interpreting Bell’s theorem as an argument against local realism.

As rude as this is going to sound, I think the reason for this confusion is just that physicists struggle to follow complex yet precise mathematical reasoning: they’re just not trained for perfectly logical precision the way mathematicians and analytic philosophers are, often using intuitive notations and conventions at the expense of exactness and perfect coherence (e.g., the Dirac delta). Bell’s proof did discuss hidden variables, but in the conclusion, not in the premises; physicists just didn’t pay enough attention to this distinction.

Here’s how that worked. For reasons of presentation, when laying out his theorem Bell separated two different cases: he first argued that theories that are local and have no hidden variables are inconsistent, and then argued that theories that are local and do have hidden variables are also inconsistent. When stated in this form, this is obviously an argument that locality is the problem and that hidden variables are irrelevant. But, influenced by a thought experiment proposed by Einstein and two co-authors, Bell presented the former line of argument in the form: ‘all local theories must have hidden variables’. Logically speaking this is the same claim, but Bell’s formulation pattern-matched very strongly to Einstein et al.’s argument that we must add hidden variables to quantum mechanics to preserve locality. Physicists thus read the second part of Bell’s argument, the proof that local hidden variables theories don’t work either, as a refutation of Einstein et al. rather than as a generalisation of Einstein et al.’s thought experiment; they failed to see that the first part of Bell’s argument was as important as the second.

And so, Bell’s theorem became identified with the denial of local realism rather than the denial of locality tout court; a special case of Bell’s theorem got mistaken for the thing itself, and its significance was lost—physicists thought they could keep locality by dropping hidden variables. This interpretation might be an understandable mistake to have made in context; but, when the proof is treated with more logical precision, we see that it is clearly a mistake.

Are you still unconvinced that Bell’s theorem has nothing to do with hidden variables? Well then, how about an argument from authority: the most prominent hidden variables theorist after the publication of Bell’s theorem was a Northern Irish physicist by the name of… J. S. Bell. For much of his life Bell held to the pilot-wave interpretation of quantum mechanics, on which the wavefunction acts as a sort of ‘pilot’ guiding particles through space; the positions and velocities of the particles are the extra variables. Bell proved that any hidden variables theory would have to be non-local—and indeed, the pilot wave theory is non-local—but he also proved that any theory without hidden variables would have to be non-local. The hidden variables are just a separate issue, which Bell’s theorem tells us nothing about.

But ultimately, none of this is the most fundamental issue with how physicists approach Bell’s theorem. Even if ‘hidden variables’ and ‘realism’ were banished from discussion, and physicists realised that Bell’s argument was just about locality, they’d still be misunderstanding the theorem on a fundamental level.

‘Empirical confirmation’

Bell’s proof is just that—a proof. You can quibble with his claim that the Bell inequalities define locality, but you cannot dispute that quantum mechanics violates the Bell inequalities; that is a mathematical fact about the theory. As a result, experimental evidence is a little irrelevant. Once you have evidence for quantum mechanics, you ipso facto have evidence for Bell inequality violation; you don’t need to do any more experiments. It would be like doing experiments to ‘confirm’ that you have four blocks total, when you have already experimentally demonstrated that you have two sets of two blocks.

Yet, experiments were done nonetheless. Starting in the 1970s, people went out and looked at quantum systems, double-checking their numbers against Bell’s theorem, and lo and behold! the Bell inequalities were violated. It took quite a while for all the possible loopholes in the experimental set-ups to be closed, but eventually they were, and we had undeniable empirical evidence that the Bell inequalities are violated.

But wait—hadn’t Bell already mathematically proved this? Yes, he had. With the force of logical necessity, he had demonstrated that the Bell inequalities were violated in quantum-mechanical systems. This wasn’t an uncertain hypothesis, it was a logical deduction from an already well-established theory. The experiments added nothing.

Crucially for the philosophers in the audience, these experiments didn’t settle the philosophical quibbles discussed above. These experiments showed only that the Bell inequalities are violated. But if you are a many-worldser or a superdeterminist, you agree that the Bell inequalities are violated; you just don’t think that this rules out locality. And the question of how to best interpret locality is one for philosophical analysis, rather than physical experiment.[1] These experiments are irrelevant to the bit of Bell’s argument that is potentially controversial, and are only relevant to the bit that had already been mathematically proven. So, so what?

Now, maybe these experiments should be seen as tests of quantum mechanics broadly, rather than of Bell’s theorem specifically. This way of interpreting the experiments is entirely reasonable: Bell’s theorem only tells us that Bell inequality violations are necessary if quantum mechanics is true, and we need experiments to check that it actually is true. But even then, these experiments are hardly the most striking or interesting evidence for quantum mechanics! By the time Bell was proving his theorem in the 1960s, never mind by the time these experiments were being carried out, quantum mechanics was already a well-established part of the physics curriculum, and it had been tested to within an inch of its life. These experiments gave physicists very little evidence for quantum mechanics, when compared with the mountains of evidence they already had.

It is, of course, important to do experiments to double-check all the testable predictions of a theory, or at least as many as possible. This is just good scientific practice. So I don’t want to complain about the fact that the experiments were done; I just want to complain about the fact that they were taken to be so shocking. Bell inequality violation should have been the null hypothesis for these experiments, the default option that would hardly require us to update our beliefs at all. Yet physicists, in general, did not react like this: they thought, and still think, that these experiments were shocking and deeply important.

And eventually they got around to awarding the highest award in physics to some of the experimenters. Alain Aspect, John F. Clauser, and Anton Zeilinger were awarded the 2022 Physics Nobel (an award that Bell himself never received) ‘for experiments with entangled photons, establishing the violation of Bell inequalities’. The committee drew special attention to the relevance of their experimental work for establishing that modern physical accounts ‘cannot be replaced by a theory that uses hidden variables’.

Despair

The failures of understanding here are immense. But the biggest failure of all is: the physics community thinks that Bell’s theorem is a testable hypothesis. They’re still working with the quasi-Popperian model somewhere in the back of their minds, and still model this as ‘Bell proposed the hypothesis that these inequalities would be violated, and then the hypothesis was tested’. This is the only way that someone who accepts quantum mechanics as a valid physical theory could be even slightly surprised by Bell inequality violation, and the only way they could think that experiments testing the Bell inequalities are Nobel-worthy.

We have already seen how untrue this is: Bell did not hypothesise anything, he proved that any theory that predicts the same phenomena as quantum mechanics must violate the Bell inequalities. But more than just being untrue, it is silly: physicists are treating a logical certainty as if it were a wild new proposal, just like the children in my fable.

Today, physicists are still approaching theory and experiment in a quasi-Popperian manner, proposing new ‘falsifiable’ hypotheses and then going out and testing them. This is a fine model for science to follow some of the time; but when nobody really believes the new theories they propose, when they are falsified as a matter of routine, and when the whole process becomes rote rule-following without any gain in knowledge, it becomes a problem. At that stage, the existing process has been exhausted, and a new methodological approach is called for.

One such methodology for theoretical physics is to be more like analytic philosophy: rather than proposing wild new theories, physicists should look closely at well-confirmed existing theories, using conceptual analysis to better understand their meaning and consequences. Many physicists would be horrified at the idea that they should be following philosophers, but this was exactly the approach taken by one of the true greats of their profession: J. S. Bell. Bell’s theorem might not look much like Russell’s ‘On Denoting’, but it is perhaps the single most successful piece of conceptual analysis of the twentieth century.

The most depressing aspect of Bell’s reception is that physicists can’t grasp this: they have assimilated his work into the quasi-Popperian paradigm, turned his deep and fascinating result into a facile ‘hypothesis’ that can only be taken seriously after it has been subject to ol’ reliable, attempted falsification. And with this year’s Nobel prize, they have once again proven that they are like those silly children: even after having their own system explained to them, they fail to grasp the nature of what they have been told. Sixty years ago, physicists didn’t understand Bell; today, they still don’t understand him.[2]

[1] Philosophers of physics, I am well aware that there are many potential objections to the sentences I just wrote (many-worlds theorists don’t accept that there is such a thing as ‘the’ outcome of an experiment and thus might not accept that ‘the’ outcome of these experiments is Bell inequality violation; there could be empirical evidence for certain interpretations if they make testable claims about empirical correlations; etc.). Your discipline is difficult to write about! Just read this paragraph as if it already included all the qualifications needed to make it true.

Postscript on quantum information theory

I only quoted part of the Nobel committee’s reasoning for their 2022 physics prize earlier; the full text states that Aspect, Clauser, and Zeilinger were awarded the prize ‘for experiments with entangled photons, establishing the violation of Bell inequalities and pioneering quantum information science’ (emphasis mine). I largely ignored this last bit in the main text, but I should say something about it now.

Quantum systems have some interesting information-theoretic properties, which can in principle be exploited to outperform standard computing systems. In particular, the systems of ‘entangled’ particles that Bell described when proving his theorem can theoretically be used to build computers that solve certain problems much faster than any known ‘classical’ method, and that could break standard methods of encryption quite easily.

Quantum information theory and quantum computing are really fun theoretical topics to dive into, but you don’t get a Nobel for fun! However, if there were some way to practically implement a real system that could outperform classical computers, this would be really important and potentially Nobel-worthy. And this would be the kind of thing that experiments with entangled systems really would be necessary for understanding: you’d need an empirical engagement with the systems, not just mathematical theorising, to know on a practical level how to use them.

But ultimately, the experiments done by this year’s Nobel winners did not teach us very much about how to build a working quantum computer that can compete with classical computers along the margins we care about. Quantum information theory is still confined to fun intellectual flights of fancy, or very precise artificial conditions; its practical, real-world impacts are thirty years away and always have been. The information-theory aspects of Bell’s theorem are at best a fun sideshow, at worst a distraction from the deep and powerful lessons about the world that we should learn from it.